
Executable Digital Twins (xDT)

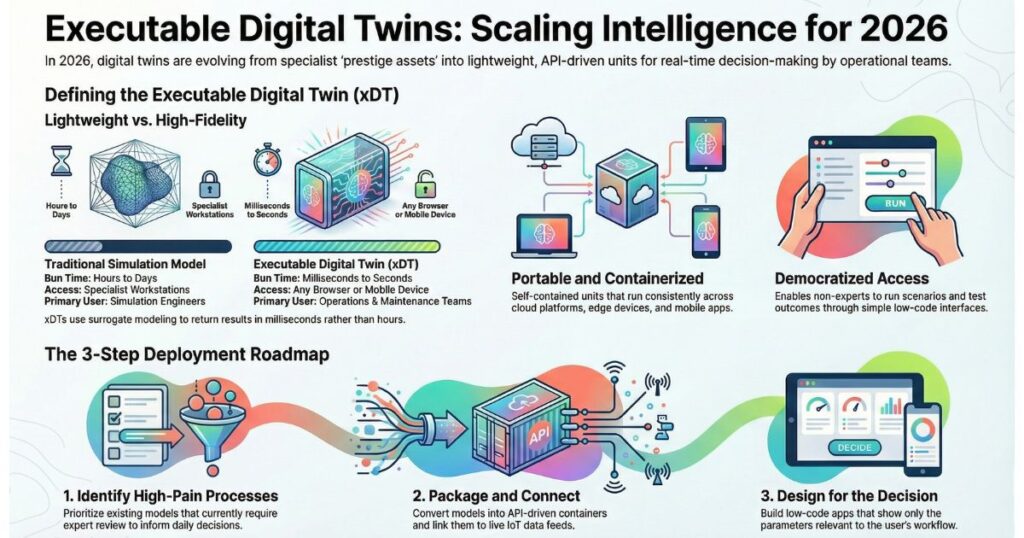

Executable Digital Twins (xDTs) represent a significant evolution in how organizations use digital twins.

While traditional digital twins are often complex, high-fidelity simulation models built and operated by specialists, an Executable Digital Twin is a lightweight, portable, and ready-to-run version designed for everyday operational use.

In simple terms, an xDT takes the essential intelligence of a physics-based model and packages it into a self-contained, API-driven unit — similar to a containerized microservice. It can be easily deployed across cloud platforms, edge devices, or integrated into dashboards and mobile apps.

Unlike conventional simulation files that require specialized software, deep expertise, and long run times, an Executable Digital Twin is:

- Portable — runs consistently anywhere without complex setup

- Lightweight — delivers fast results using surrogate modeling instead of full-scale simulations

- Configurable — allows non-experts to adjust parameters and run scenarios through simple interfaces

The result is a practical tool that brings simulation-grade insights directly to operations teams, maintenance engineers, and decision-makers — enabling them to test scenarios, predict outcomes, and make better decisions in real time, without needing to involve simulation specialists for every query.

xDTs are bridging the gap between advanced engineering models and daily operational reality, turning digital twins from occasional research assets into valuable everyday infrastructure.

Most organisations that invested in digital twins over the past few years built something impressive. They can describe the model’s accuracy, the technology stack underneath it, the specialist who validated it. What they struggle to answer is who used it last Tuesday, and what decision it actually informed.

That gap — between a twin that exists and a twin that works — is the defining challenge of intelligent system deployment in 2026. It applies equally whether the system in question monitors a manufacturing cell, optimises an energy grid, or supports a learning programme. The organisations pulling ahead are not the ones with the most sophisticated models. They are the ones that figured out how to put those models in the hands of the people who make daily decisions.

The Shift From Engineering Asset to Operational Tool

For most of their history, digital twins lived in two places: research papers and pilot-project presentations. Large manufacturers ran them on specialist workstations. Energy companies deployed them through consultancies, then left them largely untouched between scheduled reviews. The twin was a prestige asset — proof that an organisation took intelligent systems seriously — but rarely something the people who needed it most ever touched directly.

That pattern was sustainable when digital twin programmes were funded as innovation initiatives. It is not sustainable when those same programmes are five years old and finance teams are asking for a return-on-investment calculation. The question has shifted from “can we build one?” to “what has it changed?” For most organisations, the honest answer to the second question is uncomfortable.

Financial accountability is now forcing the issue. Leadership teams that funded twin programmes four or five years ago want measurable returns. “We built a highly accurate simulation of our manufacturing cell” no longer satisfies. “We used that simulation to reduce unplanned downtime by 18% last quarter, and our operations team runs scenario checks every morning” does.

The distinction between a twin as an engineering artefact and a twin as an operational tool is precisely what Executable Digital Twins (xDTs) and low-code twin-app platforms are designed to close. At Twinlabs, this principle — that AI-assisted systems only create value when embedded in daily workflows — sits at the centre of how we approach intelligent system design. A model that lives in a specialist’s folder is not an operational asset. It is an expensive document.

What Makes a Digital Twin Executable

The term sounds straightforward, but it represents a meaningful architectural departure from conventional simulation models. Understanding the distinction matters, because the deployment failures that plague most digital twin programmes trace directly back to architecture decisions made at the outset.

A conventional digital twin is typically a collection of model files, boundary condition definitions, geometry data, and material libraries — tightly coupled to a specific software installation on a specific workstation. The model may be highly accurate. It is also non-portable, brittle, and inaccessible to anyone outside the expert team who built and maintains it. Running it requires knowing the tool, configuring the environment, setting up data pipelines manually, and interpreting raw outputs that arrive in specialist formats. Every use of the model depends on the availability and attention of a simulation engineer.

An xDT breaks that coupling. It packages the essential intelligence of the model into a containerised, versioned unit that can be deployed like a cloud service and accessed via a standard API by any downstream application. Three characteristics define it.

First, it is portable. Containerised and versioned, an xDT deploys consistently across development environments, cloud providers, and edge hardware without solver reinstallation or manual configuration. The same model that runs in a central cloud environment can be deployed to an on-site edge device in a remote facility.

Second, it is lightweight. Built on surrogate or model-reduction techniques, an xDT returns results in milliseconds rather than hours. A thermal model that previously required a multi-hour finite-element run can return a useful prediction in under a second. That latency difference is what makes real-time operational use possible.
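To make the speed argument concrete, here is a minimal sketch of one of the simplest surrogate techniques: run the expensive model offline at a handful of sample points, then answer live queries by interpolating between them. The `full_order_model` function and its coefficients are hypothetical stand-ins for a real solver, and `TableSurrogate` is an illustrative name, not part of any xDT toolkit.

```python
import bisect


def full_order_model(load_kw: float) -> float:
    """Stand-in for an expensive solver run (hypothetical physics)."""
    # Temperature grows nonlinearly with load; coefficients are illustrative.
    return 25.0 + 0.8 * load_kw + 0.02 * load_kw ** 2


class TableSurrogate:
    """Piecewise-linear interpolation over precomputed full-order results."""

    def __init__(self, xs, ys):
        self.xs = list(xs)  # sample points, sorted ascending
        self.ys = list(ys)  # full-order results at those points

    def predict(self, x: float) -> float:
        if not self.xs[0] <= x <= self.xs[-1]:
            raise ValueError(f"{x} is outside the sampled range")
        # Locate the interval [xs[i], xs[i + 1]] that contains x.
        i = min(bisect.bisect_right(self.xs, x) - 1, len(self.xs) - 2)
        t = (x - self.xs[i]) / (self.xs[i + 1] - self.xs[i])
        return self.ys[i] + t * (self.ys[i + 1] - self.ys[i])


# Offline: run the expensive model once per sample point.
samples = [float(v) for v in range(0, 101, 10)]
surrogate = TableSurrogate(samples, [full_order_model(v) for v in samples])

# Online: answer a query by interpolation, with no solver call.
estimate = surrogate.predict(42.0)
error = abs(estimate - full_order_model(42.0))
```

The surrogate refuses to extrapolate outside the range it was sampled on, which is the same fidelity boundary a production xDT would need to enforce. Real surrogates typically use richer methods than a lookup table, but the offline-then-online split is the same.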

Third, it is configurable by non-specialist users through defined interfaces. A maintenance engineer can adjust operating parameters and run a scenario without understanding the solver convergence criteria underneath. The expertise is encoded in the model; the user needs only to understand the decision they are trying to make.

The honest trade-off is fidelity. A surrogate model will not match a full physics simulation under extreme edge conditions. What it will do is capture 80–95% of the behaviour relevant to the decisions operational teams face every day, and for the vast majority of daily use cases that is sufficient. The same logic applies to AI-assisted learning systems, where a well-calibrated model that learners actually engage with every day outperforms a theoretically perfect one that sits unused. The team at Twinlabs has built its approach to AI-powered learning on exactly this principle: accessible and embedded beats perfect and inert.

Why Low-Code Platforms Complete the Picture

An xDT packaged as an API is a powerful building block, but it remains a building block. The layer that converts it into something a maintenance supervisor, a sustainability manager, or a learning coordinator can use without technical assistance is the low-code app platform — what practitioners are calling the “twin-app engine.”

The concept is direct: instead of delivering a twin as a simulation file in a specialist environment, deliver it as an application that runs in a browser or on a mobile device, connects to live data sources, and presents outputs calibrated to specific operational decisions. The application abstracts all underlying model complexity behind a purpose-built interface. Users interact with the decision they need to make, not with the model that informs it.

A concrete example illustrates the difference. A maintenance engineer at a water utility opens a mobile app before the morning shift. She adjusts the incoming flow rate to reflect forecast demand and changes the ambient temperature parameter to account for the day’s weather. She runs a 24-hour scenario. The xDT returns failure probability curves and recommended inspection windows. She schedules two inspections directly from the app. She never opened a simulation suite. She never contacted the data science team. The entire interaction took four minutes. That is the twin-as-an-app model working as intended.

The underlying architecture is less exotic than it sounds. The xDT runs as a containerised service exposed behind a REST or GraphQL API. The low-code platform handles authentication, data ingestion, interface rendering, and result presentation. The bridge between them is a defined input-output contract — a schema that specifies what parameters go in and what outputs come out, along with confidence indicators and accuracy bounds.
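An input-output contract of this kind can be sketched in a few lines. The example below is an assumption-laden illustration, not a published schema: the field names (`flow_rate_m3_per_h`, `ambient_temp_c`, and so on) are hypothetical, chosen to echo the water-utility scenario, and a real deployment would more likely express the contract in whatever schema language its API gateway supports.

```python
import json
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class ScenarioInput:
    """What the twin app sends to the xDT (hypothetical fields)."""
    flow_rate_m3_per_h: float
    ambient_temp_c: float
    horizon_hours: int


@dataclass(frozen=True)
class ScenarioOutput:
    """What the xDT returns, including confidence and accuracy bounds."""
    failure_probability: float
    confidence: float
    valid_flow_range_m3_per_h: tuple
    model_version: str


def parse_input(payload: str) -> ScenarioInput:
    """Reject any request that does not match the contract exactly."""
    data = json.loads(payload)
    expected = {f.name for f in fields(ScenarioInput)}
    if set(data) != expected:
        raise ValueError(f"expected fields {sorted(expected)}, got {sorted(data)}")
    return ScenarioInput(
        flow_rate_m3_per_h=float(data["flow_rate_m3_per_h"]),
        ambient_temp_c=float(data["ambient_temp_c"]),
        horizon_hours=int(data["horizon_hours"]),
    )


def serialise_output(result: ScenarioOutput) -> str:
    """Emit the response body; tuples become JSON arrays."""
    return json.dumps(asdict(result), sort_keys=True)
```

Keeping the confidence value and the validated range in the response itself means every consuming app receives them, rather than relying on each app team to look them up.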

This architecture also solves a persistent scaling problem. Multiple applications — for maintenance, energy management, regulatory compliance, capital planning, or in a learning context, for personalised content delivery, skills gap analysis, and learner progress monitoring — can all call the same underlying xDT. When the model is updated, every consuming application benefits automatically. No more proliferation of slightly different model copies living in different team folders. For organisations building AI-powered learning environments, this shared-service architecture is what makes intelligent personalisation at scale tractable without maintaining a separate model for every use case.

Why 2026 Is the Inflection Point

Technologies rarely break through in isolation. Several distinct pressures have converged this year to push xDTs from theoretical concept to mainstream adoption, and understanding those pressures helps organisations calibrate the urgency of their response.

Demonstrating ROI is no longer optional. Digital twin programmes that began as innovation initiatives between 2019 and 2022 are mature enough that finance and operations leaders expect concrete business outcomes. Organisations face a clear choice: embed twin-derived intelligence into actual workflows, or justify the ongoing investment to sceptical executives. xDTs make the embedding step tractable because they can be delivered as services that operational systems consume, rather than as research outputs that operational teams have to interpret manually.

Edge computing architectures are creating explicit demand for portable intelligence. In process industries, discrete manufacturing, and infrastructure management, the drive toward edge-computed intelligence requires models that can run close to physical assets. A pump health twin that requires a round-trip to a central simulation cluster will have latency measured in minutes. The same twin packaged as an xDT deployed at the edge can respond in under a second. As industrial IoT infrastructure matures, this deployment model becomes the default expectation, not an advanced capability.

Model reduction techniques have matured considerably. Physics-informed neural networks, proper orthogonal decomposition, Gaussian process surrogates, and other approaches have all seen significant advances in accuracy and reliability over the past three years. The practical gap between a high-fidelity full-order model and a well-constructed surrogate has narrowed to the point where, for most operational monitoring and prediction tasks, the surrogate is genuinely fit for purpose. The engineering confidence required to deploy these models responsibly now exists at scale.

Standardisation efforts are bearing fruit. Industry bodies have spent years converging on specifications for digital twin description, interfacing, and interoperability. The overall direction — toward API-first, format-agnostic, platform-interoperable twin definitions — is exactly the environment in which xDTs thrive. Organisations that align their twin architecture to these standards now will not be rebuilding from scratch in three years.

Four Steps From “We Have a Twin” to “Our Twin Runs Operations”

Early adopters have converged on a reliable implementation sequence. It consistently outperforms the common alternative — building a perfect model first and figuring out deployment later — which has a poor track record regardless of how sophisticated the underlying twin is.

Step 1: Identify a High-Pain, Model-Ready Process

Look for a process where some form of simulation, analytical model, or rule-based prediction already exists. The goal is not to build a new twin from scratch — it is to make an existing one executable and accessible. Prioritise processes where operational teams currently rely on informal intuition or periodic expert reviews to make decisions that a model could support every day. The gap between “what the expert knows” and “what the person on shift can access” is the gap an xDT closes.

Predictive maintenance routines, energy-optimisation calculations, yield prediction logic, and thermal management models are all strong candidates. The common characteristic is that a model exists, it has been validated to some degree, and there is a clear operational decision it could inform if only it were faster and more accessible.

Step 2: Package the Model as an xDT

Apply model-reduction techniques appropriate to the physics domain — surrogate modelling, proper orthogonal decomposition, neural network emulation, or physics-informed machine learning. Wrap the reduced model in a containerised service with a documented API contract: defined inputs, defined outputs, defined confidence indicators, and explicit accuracy bounds. Version it. Store it in a model registry alongside validation reports that document exactly where the model is reliable and where it is not.
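As a rough illustration of the "version it, store it in a registry" step, the sketch below models a registry as an in-memory dictionary. `XdtRelease` and `ModelRegistry` are hypothetical names invented for this example; a real registry would be a persistent service, but the rules it enforces would look similar: versions are immutable, and nothing is published without a validation report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class XdtRelease:
    name: str
    version: tuple          # (major, minor, patch)
    validated_ranges: dict  # input name -> (low, high)
    max_abs_error: float    # worst error observed during validation
    validation_report: str  # identifier of the report (hypothetical)


class ModelRegistry:
    """In-memory stand-in for a model registry."""

    def __init__(self):
        self._releases = {}

    def publish(self, release: XdtRelease) -> None:
        key = (release.name, release.version)
        if key in self._releases:
            raise ValueError("versions are immutable; publish a new one")
        if not release.validation_report:
            raise ValueError("a release needs a validation report")
        self._releases[key] = release

    def latest(self, name: str) -> XdtRelease:
        versions = [v for (n, v) in self._releases if n == name]
        if not versions:
            raise KeyError(name)
        # Tuples compare numerically, so (1, 10, 0) beats (1, 2, 0).
        return self._releases[(name, max(versions))]
```

Storing the version as a tuple rather than a string avoids the classic mistake of sorting "1.10.0" before "1.2.0".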

This step is the most technically demanding, and it is the foundation everything else rests on. Cutting corners here — deploying a surrogate without proper validation documentation, or failing to define accuracy bounds — creates governance problems that compound over time. The practical implementation guides at 1 Hour Guide include frameworks for structuring this kind of technical documentation in a form that non-specialist stakeholders can interrogate and approve.

Step 3: Connect to Live Data and Build the Twin App

Select a low-code platform capable of connecting to your operational data sources — IoT sensors, SCADA historians, CMMS records, ERP systems. Define the data-ingestion logic that translates live operational readings into the input parameters your xDT expects. Build the interface around the specific decisions the target users need to make. Expose only the parameters that are operationally relevant to that user group. The goal is decision support, not model exploration.
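The data-ingestion logic is often little more than a mapping table plus a freshness check. The sketch below assumes two hypothetical sensor tags (`PUMP01.FLOW_LPS` and `SITE.AMBIENT_F`) and a 15-minute staleness limit; both are illustrative choices, and a low-code platform would usually express the same mapping through its own configuration screens.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical mapping: sensor tag -> (xDT input name, unit conversion).
TAG_MAP = {
    # Litres per second to cubic metres per hour.
    "PUMP01.FLOW_LPS": ("flow_rate_m3_per_h", lambda lps: lps * 3.6),
    # Fahrenheit to Celsius.
    "SITE.AMBIENT_F": ("ambient_temp_c", lambda f: (f - 32.0) * 5.0 / 9.0),
}
MAX_AGE = timedelta(minutes=15)  # assumed staleness limit


def build_inputs(readings: dict, now: datetime) -> dict:
    """Turn {tag: (value, timestamp)} into {xDT input name: value}."""
    inputs = {}
    for tag, (name, convert) in TAG_MAP.items():
        if tag not in readings:
            raise ValueError(f"missing reading for {tag}")
        value, stamp = readings[tag]
        if now - stamp > MAX_AGE:
            raise ValueError(f"stale reading for {tag}")
        inputs[name] = convert(value)
    return inputs


now = datetime(2026, 1, 5, 6, 0, tzinfo=timezone.utc)
readings = {
    "PUMP01.FLOW_LPS": (30.0, now - timedelta(minutes=2)),
    "SITE.AMBIENT_F": (68.0, now - timedelta(minutes=5)),
}
inputs = build_inputs(readings, now)
```

Failing loudly on a missing or stale reading is a deliberate choice here: a twin app that silently runs on old data will return a prediction that looks current but is not.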

This is where most implementations either succeed or fail. Teams that design the app around the model — showing users everything the model can do — produce tools that confuse and alienate operational staff. Teams that design the app around the decision — showing users only what they need to make a specific call — produce tools that get used every day. The difference is not technical. It is a design discipline that requires deep understanding of how operational teams actually work, which is why involving end users from the earliest stages of app design is non-negotiable. For teams without a structured approach to this kind of user-centred implementation, AI Coach provides advisory support specifically for organisations integrating AI-driven decision tools into operational workflows.

Step 4: Run a Focused Pilot and Iterate

Deploy with one operations team. Measure three things: does the model’s output align with observed outcomes (accuracy), do users actually open and use the app (adoption), and do decisions informed by the app produce better outcomes than the baseline (impact). These three metrics tell you everything you need to know about whether the deployment is working.
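The three pilot metrics are simple enough to compute from a spreadsheet export. The sketch below shows one possible definition of each, assuming mean absolute error for accuracy, average daily share of active users for adoption, and relative change against baseline for impact. These definitions are assumptions for illustration; a pilot team should agree its own before the pilot starts.

```python
from statistics import mean


def accuracy_mae(predicted, observed) -> float:
    """Mean absolute error between model output and observed outcomes."""
    return mean(abs(p - o) for p, o in zip(predicted, observed))


def adoption_rate(active_users_per_day, team_size: int) -> float:
    """Average share of the team that used the app on a given day."""
    return mean(n / team_size for n in active_users_per_day)


def impact(baseline_outcomes, pilot_outcomes) -> float:
    """Relative change versus baseline; negative means a reduction."""
    base = mean(baseline_outcomes)
    return (mean(pilot_outcomes) - base) / base


# Illustrative numbers only, not measurements from a real pilot.
mae = accuracy_mae([0.10, 0.30, 0.20], [0.12, 0.25, 0.20])
adoption = adoption_rate([6, 8, 7, 9, 10], team_size=10)
downtime_change = impact([10.0, 12.0, 8.0], [9.0, 8.0, 7.6])
```

With these made-up inputs, adoption averages 80% of the team and downtime falls by roughly 18% against baseline.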

Expect to iterate significantly on the interface in the first three months. Operational users are expert critics of tools that do not fit their workflow, and their feedback — if gathered systematically — is invaluable for improving both the app and the underlying model. A 90-day pilot that generates rich user feedback and results in a substantially improved tool is far more valuable than a six-month pilot that delivers polished dashboards without measuring whether anyone uses them.

Before committing resources to a full pilot, run a quick readiness assessment. Is the underlying model stable and sufficiently validated? Are the required data feeds reliable and consistent enough to power a live application? Are the latency requirements of the use case compatible with a surrogate-based approach, or does the decision genuinely require full-order simulation? Answering these questions honestly before committing to a deployment timeline saves significant rework. The step-by-step readiness frameworks at 1 Hour Guide provide a structured approach to this kind of pre-deployment assessment.
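The readiness questions above translate naturally into a checklist that returns a list of blockers. In this sketch the `Readiness` class and the 95% feed-uptime threshold are assumptions made for illustration; each organisation would set its own thresholds.

```python
from dataclasses import dataclass


@dataclass
class Readiness:
    model_validated: bool       # is the underlying model stable and validated?
    feed_uptime: float          # fraction of expected readings received
    required_latency_s: float   # what the decision needs
    surrogate_latency_s: float  # what the xDT delivers
    needs_full_order: bool      # does the decision demand full-order simulation?

    def blockers(self, min_uptime: float = 0.95) -> list:
        """Return the reasons not to start the pilot yet (empty if ready)."""
        issues = []
        if not self.model_validated:
            issues.append("model is not sufficiently validated")
        if self.feed_uptime < min_uptime:
            issues.append("data feeds are not reliable enough")
        if self.needs_full_order:
            issues.append("decision requires full-order simulation")
        elif self.surrogate_latency_s > self.required_latency_s:
            issues.append("surrogate is too slow for this use case")
        return issues


ready = Readiness(True, 0.99, 2.0, 0.4, False)
not_ready = Readiness(True, 0.80, 2.0, 0.4, True)
```

Returning named blockers rather than a single pass or fail score gives the team something specific to fix before the deployment timeline is set.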

Democratising Simulation-Grade Intelligence — What It Actually Means

The phrase “democratising digital twins” is used frequently and loosely. It is worth being precise about what it means in practice, because the misunderstanding of this concept leads to both over-investment and under-governance.

What democratisation means: for the first time, the predictive power of physics-based simulation can reach the people who make daily operational decisions, without those people needing to understand finite element analysis or solver convergence. Plant managers can run stress scenarios. Facility operators can test energy-efficiency interventions against a digital model before committing physical resources. Sustainability leads can model the carbon impact of operational changes and present numbers that finance teams can act on.

What it does not mean: simulation experts become redundant. Quite the opposite. Democratisation of xDT consumption creates a more valuable role for engineers and data scientists — designing models, validating them rigorously, packaging them correctly, defining appropriate use boundaries, and overseeing ongoing performance. The expert’s contribution shifts from “I run simulations for people who ask me” to “I build and govern the intelligence infrastructure that the entire organisation uses.” That is a strategic upgrade. The same pattern holds in AI-assisted learning contexts, where the role of the learning designer shifts from content creation to learning intelligence architecture — a transition that AI Coach supports through its advisory programmes for organisations building AI capability at the leadership level.

For operations and technology leaders, the xDT-plus-low-code model offers a compelling proposition: deploying simulation-informed operational tools across multiple business units without assembling a dedicated simulation team in each one. A single team of twin engineers maintains a portfolio of validated xDTs. Business units consume those xDTs through purpose-built apps, customised for their specific workflows. The economics improve dramatically when the model is a shared service rather than a bespoke per-department engagement.

The feedback loop this creates is also worth noting. When more users interact with a twin app daily, they generate rich observations about where the model’s predictions align with reality and where they diverge. That feedback accelerates model validation and refinement in ways that periodic expert reviews cannot match. Daily operational use is, in effect, a continuous validation programme.
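One lightweight way to capture that feedback is a rolling comparison of predictions against observed outcomes. The sketch below is an assumption about how such a monitor might look, with a hypothetical `DriftMonitor` class; the window size and tolerance would come from the model's validation report.

```python
from collections import deque


class DriftMonitor:
    """Tracks recent prediction error and flags the model for review."""

    def __init__(self, window: int, tolerance: float):
        self.errors = deque(maxlen=window)  # keeps only the latest errors
        self.tolerance = tolerance

    def record(self, predicted: float, observed: float) -> None:
        self.errors.append(abs(predicted - observed))

    def mean_error(self) -> float:
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def needs_review(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alarms.
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and self.mean_error() > self.tolerance


monitor = DriftMonitor(window=3, tolerance=0.05)
for predicted, observed in [(0.10, 0.11), (0.20, 0.22), (0.15, 0.16)]:
    monitor.record(predicted, observed)
healthy = monitor.needs_review()

for predicted, observed in [(0.10, 0.30), (0.20, 0.35), (0.15, 0.40)]:
    monitor.record(predicted, observed)
drifting = monitor.needs_review()
```

Because the window only holds recent observations, the monitor reflects current behaviour rather than the model's lifetime average.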

The Governance Problems You Cannot Afford to Ignore

Enthusiasm for xDTs should not obscure their real limitations. Three governance failures account for most xDT deployments that produce confidently wrong answers, and each is entirely preventable with the right disciplines in place from the outset.

Fidelity boundaries are real. xDTs are not appropriate for ultra-high-fidelity physics studies, novel failure mode analysis, or R&D investigations where full model resolution is essential. Misapplying a surrogate model to a question that requires its high-fidelity counterpart produces plausible-looking results that are simply incorrect. Every xDT deployment must carry a clear, accessible statement of what the model is designed for and what it is not — and that statement must be visible to users, not buried in technical documentation.

Validation documentation is non-negotiable. Every xDT deployment must carry documented evidence of the conditions under which the model was tested, the accuracy metrics achieved, and the explicit bounds outside which results should not be trusted. Without this, users have no basis for calibrating their confidence in what the model returns. Organisations that treat validation as a box-ticking exercise rather than a genuine quality gate will eventually face the consequences.

Input guardrails protect the model and the user. Applications that expose model parameters to non-expert users must include validation logic that prevents physically implausible configurations. Without guardrails, a user can inadvertently extrapolate well beyond the model’s validated range and receive a prediction that looks credible but is not. This is not a hypothetical risk — it is a documented failure mode in multiple early xDT deployments.
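A guardrail of this kind can be a thin wrapper around the model call. The following sketch assumes the validated ranges are known from the validation report; the parameter names and bounds in `VALIDATED_RANGES` are hypothetical, and `guarded_predict` is an illustrative helper rather than an established API.

```python
# Hypothetical validated ranges, taken from a validation report.
VALIDATED_RANGES = {
    "flow_rate_m3_per_h": (50.0, 200.0),
    "ambient_temp_c": (-10.0, 45.0),
}


def check_inputs(inputs: dict, ranges: dict = VALIDATED_RANGES) -> list:
    """Return a list of problems; an empty list means the inputs are safe."""
    problems = []
    for name, (low, high) in ranges.items():
        if name not in inputs:
            problems.append(f"{name} is missing")
        elif not low <= inputs[name] <= high:
            # This branch also catches NaN, which fails every comparison.
            problems.append(f"{name}={inputs[name]} is outside [{low}, {high}]")
    return problems


def guarded_predict(model, inputs: dict):
    """Call the model only when every input is inside its validated range."""
    problems = check_inputs(inputs)
    if problems:
        raise ValueError("; ".join(problems))
    return model(inputs)


def toy_model(inputs: dict) -> float:
    """Stand-in for the xDT call (hypothetical)."""
    return 0.001 * inputs["flow_rate_m3_per_h"]


ok = guarded_predict(toy_model, {"flow_rate_m3_per_h": 120.0, "ambient_temp_c": 20.0})
rejected = check_inputs({"flow_rate_m3_per_h": 500.0, "ambient_temp_c": 20.0})
```

Returning every problem at once, rather than stopping at the first, lets the app show the user exactly which parameters to correct.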

The broader cultural risk is the most insidious of all: a polished mobile interface inspires confidence that may not be warranted by the model underneath. Visual quality is not a proxy for analytical rigour. A well-designed dashboard presenting unreliable predictions is more dangerous than a poorly designed one, because users are less likely to question what looks authoritative. Organisations deploying AI-assisted operational tools — whether digital twins, learning analytics systems, or other intelligent platforms — benefit from establishing governance frameworks before rolling out at scale. This is precisely the kind of structural advisory work that AI Coach undertakes with organisations building responsible AI capability.

Building for Operationalisation From Day One

The organisations that have extracted the most value from digital twin investments share one characteristic: they designed for operationalisation from the beginning. They did not build a perfect twin and then figure out how to deploy it. They engaged operations teams during model development, defined the decisions the model would inform before writing the first line of solver code, and treated deployment as a design constraint rather than an afterthought.

For organisations just beginning their digital twin journey, the xDT-plus-low-code model offers a valuable lesson in sequencing. Build with the eventual packaging as an executable service in mind. Select model-reduction techniques that preserve the accuracy needed for operational decisions, not maximum possible fidelity. Involve the people who will use the tool in defining what the tool needs to show them. The “build a perfect twin first, then figure out how to use it” approach has a poor track record. The “build a good-enough twin that operations teams actually use every day” approach is what produces lasting organisational capability.

This principle extends beyond industrial digital twins. The same sequencing logic applies to AI-assisted learning platforms, where the temptation to build a comprehensive, feature-rich system before engaging learners is equally strong and equally counterproductive. Twinlabs applies this build-for-use-first philosophy across its AI-powered learning design work, treating learner engagement as a design input from day one rather than a deployment outcome to be optimised later.

The Bottom Line

The technical barriers to operational digital twin deployment have largely been resolved. Model reduction, containerisation, cloud-native deployment, and low-code platforms have all matured to the point where implementation is tractable for most mid-sized organisations. The remaining barriers are organisational: the will to embed twin-derived intelligence into actual workflows, the discipline to govern those deployments rigorously, and the design thinking to build tools that operational teams actually want to use.

The question every digital twin leader should ask is a direct one: how many people in this organisation actually use our twins to make decisions, how often, and in what workflows? If the honest answer is “mostly just the engineering team, occasionally” — the path described here is how to change that. Building systems that people actually use, every day, at scale, is what Twinlabs is designed to support — and it is the only measure of a digital twin programme that ultimately matters.