
The idea of externalising human memory is not new. Vannevar Bush imagined it in 1945 with the Memex — a hypothetical desk device that would store everything a person knew and let them navigate it by association rather than by index. Niklas Luhmann built a physical version in the 1950s with his Zettelkasten — 90,000 index cards, cross-referenced by hand over four decades, that produced 70 books and more than 400 academic papers. David Allen systematised the principle in 2001 with Getting Things Done — a trusted external system designed to free the mind from remembering so it could focus on thinking. Tiago Forte synthesised all of it in 2022 into the Second Brain — a digital, structured, compounding knowledge system that anyone could build. Millions tried. Most abandoned it within months. Not because the idea was wrong, but because the maintenance burden eventually overwhelmed the value. Andrej Karpathy solved that problem in April 2026 with a single architectural shift. This article traces that full arc — from Bush’s desk to Karpathy’s markdown folder — and shows you how to build the version that actually survives.

The Long History of the Second Brain

The desire to build an external memory system is as old as writing itself. What changed across the twentieth century was not the ambition but the architecture — each generation produced a more structured, more navigable version of the same fundamental idea.

Vannevar Bush published his essay As We May Think in the July 1945 edition of The Atlantic. In it, he described a device he called the Memex — a mechanised desk fitted with microfilm storage that would hold a person’s complete library of books, records, and communications. The critical feature was not storage but navigation. Bush argued that the human mind does not work by index. It works by association — one thought triggering another through a web of connections built from experience. The Memex would replicate that associative structure, allowing a user to create permanent trails between documents, so that finding one idea would surface the ideas connected to it. Bush’s vision was precise and prescient. What he could not solve was the question of who builds and maintains those trails.

Niklas Luhmann answered that question, at least for himself, with the Zettelkasten. A German sociologist working from the 1950s until his death in 1998, Luhmann built a physical card-based knowledge system that is now studied as one of the most productive intellectual tools ever devised. Each card in his Zettelkasten held a single idea. Each idea was linked by hand to related ideas across the full collection. Over forty years, the system grew to 90,000 cards and generated an extraordinary body of work — 70 books, more than 400 academic articles, and an influence on systems theory that persists today. Luhmann credited the Zettelkasten directly: he described it not as a filing system but as a thinking partner, a structure that surfaced unexpected connections and generated ideas he would not have reached through linear reading alone. The cost was the maintenance. Luhmann spent significant portions of every working day filing, cross-referencing, and updating the system. For most people, that cost was prohibitive.

David Allen shifted the frame in 2001. His Getting Things Done methodology was not primarily a knowledge system — it was a productivity system built on a single psychological insight: the mind performs poorly when it is used as a storage device. Every open loop, every uncommitted task, every half-formed idea held in working memory consumes cognitive resources that could be used for actual thinking. The solution was a trusted external system — a complete capture of everything requiring attention, organised into projects, next actions, and reference material. Allen’s system externalised the cognitive overhead of remembering so the mind could focus on doing. Getting Things Done spread globally, generated an industry of tools and consultants, and introduced millions of people to the principle that the mind is for having ideas, not for holding them.

Tiago Forte took that principle and extended it from task management to knowledge management. His Second Brain methodology, developed through his Building a Second Brain course from 2017 and crystallised in his 2022 book of the same name, proposed a complete digital knowledge system organised around four operations he called CODE — Capture, Organise, Distil, Express. The system was built for the digital age: notes in Notion or Obsidian, highlights from Kindle, clippings from the web, all organised into a structure that would compound over time. Forte’s insight was that most knowledge workers are already capturing enormous quantities of information — the problem is that none of it connects. The Second Brain was designed to make the connections permanent and navigable. The response was significant. Hundreds of thousands of people enrolled in his course. The book became a bestseller. A global community of practitioners built tools, tutorials, and variations on the methodology.

Why Every Second Brain Eventually Dies

The failure mode of every personal knowledge system is identical, whether it is a physical Zettelkasten, a Notion database, an Obsidian vault, or a Forte-style Second Brain. The system starts well. The first weeks of capture and organisation are satisfying — the knowledge base grows, connections emerge, the graph view fills with nodes. Then the maintenance load begins to accumulate.

Every new source added to the system requires not just filing but integration. A new article about machine learning does not just need a summary page. It needs its key claims compared against existing pages on related topics. It needs backlinks created from relevant concept pages. It needs any contradictions with previously filed material flagged and resolved. It needs the master index updated. For a system with 20 sources, this takes minutes. For a system with 200 sources, it takes hours. For a system with 500 sources, it becomes a job.

The asymmetry is structural. The value of a knowledge system lies in the connections between ideas, and the number of possible connections grows roughly with the square of the number of sources. But those connections exist only if someone makes them. The work of integrating each new source grows with the size of the system it must be checked against (minutes at 20 sources, hours at 200), while the time a person can give it stays fixed. In the early stages, value outpaces cost. Past a certain threshold, different for every person but always present, cost outpaces value. At that point, most people stop adding new material. The system fossilises. Within months it is outdated. Within a year it is abandoned.

This is not a discipline problem. It is an architectural one. Luhmann could maintain his Zettelkasten because he had no other professional obligations beyond academic writing and the Zettelkasten itself. Allen’s system manages tasks, not knowledge — the maintenance burden is lower because tasks complete and disappear. Forte’s CODE methodology is elegant, but it depends on a human performing the Distil and Organise steps consistently, indefinitely, at increasing scale. That dependency is the single point of failure.

Bush identified the vision in 1945 but was honest about the gap. He described the Memex as a tool that would need to be populated and maintained by the user. He had no answer for who would do that work or how the trails between documents would stay current as knowledge evolved. The vision was right. The maintenance question remained open for eighty years.

Karpathy’s Architectural Shift: The LLM as Librarian

On 3 April 2026, Andrej Karpathy — co-founder of OpenAI, former head of AI at Tesla, and founder of Eureka Labs — posted on X that a significant fraction of his recent AI usage had shifted away from code generation toward knowledge management. He described a system where raw research materials were dropped into a folder, an LLM processed them, and the result was a structured, interlinked wiki that grew richer with every source added. His research wiki on a single topic had reached approximately 100 articles and 400,000 words — longer than most doctoral dissertations — without Karpathy writing a single word of it directly.

The following day he published a GitHub gist laying out the full architecture. It has since accumulated over 5,000 stars and spawned dozens of community implementations.

The core insight is a shift from retrieval to compilation. In a standard RAG system, documents are stored and retrieved at query time — the AI rediscovers relevant content from scratch every time a question is asked. In Karpathy’s system, documents are compiled once into a structured wiki. The synthesis is done at ingestion, not at query time. The result is persistent. Every subsequent query draws on pre-built, pre-connected knowledge rather than fragmented retrieval.

Karpathy’s metaphor is precise: Obsidian is the IDE, the LLM is the programmer, the wiki is the codebase. The human reads the wiki. The LLM writes and maintains it. The human’s job is curation and judgement — deciding what to read, asking the right questions. The LLM’s job is everything else: summarising, cross-referencing, filing, linting, logging.

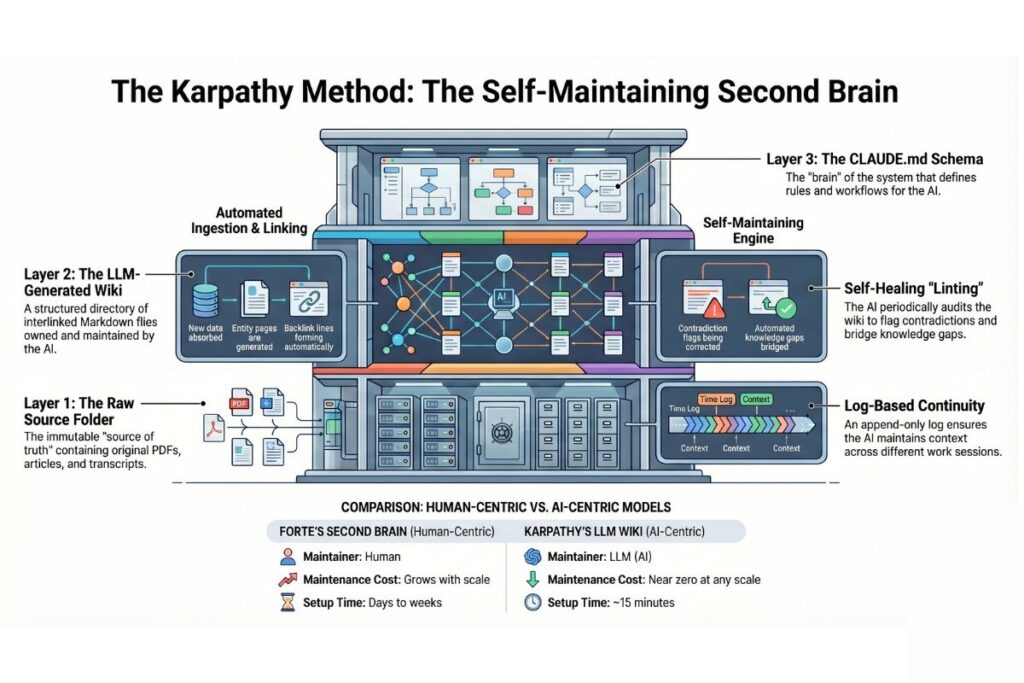

The architecture has three layers that must be kept strictly separate. The raw sources folder is the immutable source of truth — articles, PDFs, transcripts, screenshots. The LLM reads from this folder and never modifies it. The wiki is a directory of LLM-generated markdown files: entity pages, concept summaries, a master index, a log. The LLM owns this layer entirely. The schema is a file called CLAUDE.md at the root of the vault — it defines the conventions, workflows, and folder structure the LLM must follow in every session. Without this file, the AI reverts to being a generic chatbot. With it, it becomes a disciplined wiki maintainer that can re-orient itself completely at the start of any new session.
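The three layers can be sketched as a folder skeleton. This is a minimal illustration, not Karpathy's actual layout: only the raw folder, the wiki directory, and the `CLAUDE.md` file at the vault root come from the description above. The function name `create_vault_skeleton`, the placement of `index.md` and `log.md` inside `wiki/`, and the starter text are assumptions.

```python
from pathlib import Path


def create_vault_skeleton(vault: Path) -> list[Path]:
    """Create the three layers if missing and return the paths that were created.

    Assumed layout (illustrative only):
      raw/        immutable sources, read-only for the LLM
      wiki/       LLM-owned pages, including index.md and log.md
      CLAUDE.md   schema file at the vault root
    """
    created: list[Path] = []

    for folder in (vault / "raw", vault / "raw" / "assets", vault / "wiki"):
        if not folder.exists():
            folder.mkdir(parents=True)
            created.append(folder)

    # Placeholder contents; a real schema would spell out conventions and workflows.
    starter_files = {
        vault / "CLAUDE.md": "# Wiki schema\n\n(conventions, workflows, folder structure)\n",
        vault / "wiki" / "index.md": "# Index\n",
        vault / "wiki" / "log.md": "# Log\n",
    }
    for path, text in starter_files.items():
        if not path.exists():  # never overwrite an existing schema, index, or log
            path.write_text(text, encoding="utf-8")
            created.append(path)

    return created
```

Calling `create_vault_skeleton(Path("my-vault"))` a second time returns an empty list, which keeps the sketch safe to re-run against a vault that already has content.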

The choice of plain markdown is deliberate. Markdown files are human-readable, portable, version-controllable with git, and require no proprietary database or infrastructure. At the scale of 100 to 500 sources, a well-structured markdown index outperforms vector database RAG pipelines on both cost and accuracy. The entire compiled knowledge base fits within the context window of a modern long-context model. Tools like Twinlabs apply this same principle to AI-assisted learning architecture — structured, portable knowledge that compounds rather than disperses.

The maintenance problem that killed every previous second brain is solved not by making humans more disciplined but by replacing the human in the maintenance loop entirely. LLMs do not get bored. They do not forget to update a cross-reference. They do not deprioritise filing when a deadline approaches. The cost of maintenance drops to near zero, which means the system can scale indefinitely without the structural failure mode that ended every previous attempt.

The 4 Operations That Make the Second Brain Self-Maintaining

The wiki evolves through four primary operations. Each produces durable output. None disappears into chat history.

Ingest

A new source is dropped into the raw folder and the AI is instructed to process it. The AI reads the document in full, identifies key topics, checks the master index for existing pages, and begins updating. A single source can touch 10 to 15 wiki pages in one pass — the source summary page, entity pages for people and organisations mentioned, concept pages for ideas developed or challenged, and the master index. New backlinks are created. Contradictions with existing claims are flagged on the relevant pages.
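The "checks the master index for existing pages" step is the one part of ingestion that is easy to picture as plain code. The sketch below assumes the index lists pages as Obsidian wikilinks such as `[[Zettelkasten]]`; the function name `plan_page_updates` and the shape of its return value are hypothetical. In the system described here the LLM performs this check itself while reading the index.

```python
import re

# Captures the page name in [[Page]], [[Page|alias]], and [[Page#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def plan_page_updates(topics: list[str], index_text: str) -> dict[str, list[str]]:
    """Split topics into pages that already exist in the index and pages to create."""
    existing = {name.strip().lower() for name in WIKILINK.findall(index_text)}
    plan: dict[str, list[str]] = {"update": [], "create": []}
    for topic in topics:
        key = "update" if topic.strip().lower() in existing else "create"
        plan[key].append(topic)
    return plan
```

Matching is case-insensitive on the assumption that "memex" and "Memex" should resolve to the same page. A real schema would state its own naming rule.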

This is Forte’s Capture and Organise steps, automated. The human’s role in ingestion is reduced to dropping a file into a folder. The AI handles everything that previously required the human to stay engaged.

Query

Questions are asked against the wiki rather than against raw documents. The AI reads the master index first, identifies relevant pages, drills into them, and synthesises an answer with citations to specific wiki pages. The answers are grounded in compiled knowledge rather than fragmented retrieval. For organisations using AI Coach to build AI-assisted workflows, query is where the system pays back its setup cost — every question draws on the full accumulated knowledge base, not just the most recently added sources.

The discipline that distinguishes this system from ordinary chat is filing. When a query produces a substantive response — a comparative analysis, a synthesis of competing views, a decision framework — that response becomes a new wiki page. The act of asking questions makes the knowledge base richer. Forte called this the Express step; in Karpathy’s system, the best expressions are filed back into the wiki automatically.
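Filing an answer back into the wiki amounts to writing one new page and adding one index entry. The following is a rough sketch under stated assumptions: the function name `file_answer`, the "Sources" section, and the one-line index format are all hypothetical, and the index is assumed to live at `wiki/index.md`.

```python
import re
from pathlib import Path


def file_answer(wiki_dir: Path, title: str, body: str, sources: list[str]) -> Path:
    """Save a query answer as a new wiki page and list it in the index."""
    # Drop characters that are unsafe in filenames or meaningful inside wikilinks.
    safe_title = re.sub(r'[\\/:*?"<>|#^\[\]]', "", title).strip()
    page = wiki_dir / f"{safe_title}.md"
    if page.exists():
        # Refuse to silently replace an existing page.
        raise FileExistsError(page)

    cited = "\n".join(f"- [[{name}]]" for name in sources)
    page.write_text(
        f"# {safe_title}\n\n{body.strip()}\n\n## Sources\n\n{cited}\n",
        encoding="utf-8",
    )

    # Append only, so existing index entries are left untouched.
    with (wiki_dir / "index.md").open("a", encoding="utf-8") as index:
        index.write(f"- [[{safe_title}]]\n")
    return page
```

Listing the cited pages as wikilinks means the new page arrives with outbound links already in place, so it shows up connected in the graph view.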

Lint

Periodically, the AI is asked to audit the entire wiki. The scope is specific: find contradictions between pages, identify claims that newer sources have superseded, surface orphan pages with no inbound links, flag important concepts mentioned across multiple pages that do not yet have a dedicated page, and identify gaps where a targeted search would fill a known hole. Linting is what keeps the wiki trustworthy over time. Without it, early claims persist on old pages long after they have been revised elsewhere. With regular linting, the system heals itself.
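Of the lint checks listed above, orphan detection is the most mechanical, and a small script can illustrate it. The sketch assumes pages link to each other with Obsidian wikilinks and that links from the index and the log should not count as inbound, since an index that lists every page would otherwise hide every orphan. The function name `find_orphans` and the `exempt` parameter are hypothetical.

```python
import re
from pathlib import Path

# Captures the target in [[Page]], [[Page|alias]], and [[Page#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def find_orphans(wiki_dir: Path, exempt: tuple[str, ...] = ("index", "log")) -> list[str]:
    """Return names of pages that no other content page links to, sorted."""
    # Pages are keyed by filename without extension; duplicate names in
    # different subfolders would collide in this simplified version.
    pages = {p.stem: p.read_text(encoding="utf-8") for p in wiki_dir.rglob("*.md")}

    linked: set[str] = set()
    for name, text in pages.items():
        if name in exempt:
            continue  # index and log links are not treated as real inbound links
        for target in WIKILINK.findall(text):
            target = target.strip().split("/")[-1]  # tolerate [[folder/Page]]
            if target != name:  # a page linking to itself does not count
                linked.add(target)

    return sorted(n for n in pages if n not in linked and n not in exempt)
```

Contradiction and supersession checks need to read meaning, not just links, which is why the article assigns the full audit to the LLM. A script like this only covers the structural part.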

Log

Every operation is recorded in an append-only log.md file. Each entry is timestamped and prefixed consistently so the log can be queried with simple tools. The log serves two purposes: it gives the human a timeline of how the wiki has evolved, and it gives the AI orientation at the start of a new session — it reads recent log entries and understands what was done last time. This is the feature that solves the context-reset problem that every LLM user encounters. The session may end, but the log ensures the next session begins with full continuity.

Practical guides on structuring these four operations for business use are available through 1 Hour Guide — step-by-step frameworks that translate the technical pattern into operational workflow.

How to Build It

The setup requires no specialised infrastructure. Three tools, one folder structure, one schema file.

- Download Obsidian from obsidian.md and create a new vault. This is simply a folder on your computer. Everything in it is plain markdown. Obsidian provides the graph view, the backlink panel, and the visual navigation layer; it is the reading interface for the wiki.

- Open the vault in Claude Code. Navigate to the vault folder in your terminal and run `claude`. This gives the AI direct read-write access to all vault files. Claude Code is the engine that processes sources, maintains the wiki, and executes all four operations.

- Bootstrap the schema. Copy Karpathy’s full gist from his GitHub and paste it into Claude Code with the instruction: “You are my LLM wiki agent. Implement this pattern as my complete knowledge base. Create the `CLAUDE.md` schema file and full folder structure.” Claude Code will generate the raw, wiki, and schema directories along with the `index.md` and `log.md` files. The schema is the most important output; it is what makes the system consistent across sessions.

- Install the Obsidian Web Clipper browser extension. Set its default save location to your raw folder. This replicates Forte’s Capture step with zero friction: any web article is saved as clean markdown in one click, ready for the next ingestion pass.

- Enable local image storage. In Obsidian settings, set the attachment folder path to `raw/assets/` and bind a hotkey to “Download attachments for current file.” After clipping any image-heavy article, the hotkey downloads all images to local disk so the AI can reference them directly rather than relying on external URLs.

- Run the first ingestion. Drop a source into the raw folder and instruct Claude Code: “Process the new source in raw/ and update the wiki.” Watch the wiki folder populate in Obsidian’s file explorer. Open the graph view. The first connections are already there.

From the first ingestion the system is operational. Each subsequent source compounds what is already built. The Twinlabs approach to knowledge architecture applies directly here — the value is not in any single source but in the structure that connects them. AI Coach provides advisory support for teams configuring Claude Code for persistent organisational workflows if a more guided setup is needed.

Forte vs Karpathy: What Changed and What Did Not

Forte and Karpathy are solving the same problem from different positions. Forte approached it as a productivity practitioner — his system is designed to be accessible, humanistic, and applicable without technical knowledge. Karpathy approached it as an AI engineer — his system is designed to be scalable, self-maintaining, and technically precise. The differences are real, but so is the continuity.

What Forte got right, and what Karpathy builds on directly, is the architectural insight that knowledge must be actively synthesised rather than passively stored. Both systems reject the idea that simply collecting information is sufficient. Both insist on connection — Forte through his PARA organisation system and progressive summarisation, Karpathy through the LLM’s cross-referencing and backlink maintenance. Both recognise that the value of a knowledge system lies not in any individual note but in the relationships between notes.

What Karpathy changed is the maintenance model. Forte’s system requires the human to perform the Organise and Distil steps consistently. Karpathy’s system delegates those steps entirely to the LLM. That single change removes the structural failure mode that caused most people to abandon Forte’s approach.

| | Forte’s Second Brain | Karpathy’s LLM Wiki |
|---|---|---|
| Who maintains it | Human | LLM |
| Maintenance cost | Grows with scale | Near zero at any scale |
| Technical requirement | None | Claude Code, basic terminal |
| Best for | Personal productivity, creative work | Research, competitive intelligence, team knowledge |
| Failure mode | Maintenance abandonment | Schema drift, hallucination propagation at scale |
| Setup time | Days to weeks | 15 minutes |
| Knowledge compounds | Yes, if maintained | Yes, automatically |

The practical conclusion is that Forte’s methodology and Karpathy’s architecture are complementary rather than competing. Forte’s CODE framework — Capture, Organise, Distil, Express — maps directly onto Karpathy’s four operations. Capture maps to the Web Clipper and raw folder. Organise and Distil map to ingest and lint. Express maps to query with filing. The human insight is Forte’s. The maintenance infrastructure is Karpathy’s. Together they produce a system that is both thoughtful and sustainable.

The audience for each differs. Forte’s system suits knowledge workers who want a personal productivity practice and are willing to invest the maintenance time. Karpathy’s system suits researchers, engineers, and business operators who need a knowledge base that scales beyond what any individual can maintain manually. For teams — where the maintenance burden is distributed across multiple contributors and the knowledge base must stay consistent — Karpathy’s approach is the only practical option. 1 Hour Guide covers practical implementation paths for both individual and team knowledge systems.

The Bottom Line

Bush described the problem in 1945. Luhmann solved it for himself at enormous personal cost. Allen reduced the cognitive overhead but did not address knowledge accumulation. Forte built the most accessible version of the idea yet and gave it a name that spread globally. Karpathy answered the one question that none of them could: who does the maintenance.

The answer is the LLM. Not because LLMs are infallible — they are not, and a poorly designed schema or an unreviewed ingestion can embed errors that propagate through the wiki. But because the cost of LLM maintenance is low enough that the system can sustain itself at a scale no individual human could manage alone. The structural failure mode that killed every previous second brain — the point where maintenance cost exceeds knowledge value — no longer applies when the maintenance is automated.

The vision Bush articulated eight decades ago is now buildable in 15 minutes with a folder of markdown files, a free note-taking application, and an AI agent running in a terminal window. The compounding that Forte described — knowledge that grows richer with every source added and every question asked — is now achievable without the discipline burden that made it theoretical for most people.

The second brain that actually survives is not the one you maintain. It is the one that maintains itself.